For a while, my mpssh runs were getting slow. I use it daily against about 1400 Linux hosts, and a trivial true command across 999 parallel SSH sessions had drifted to roughly two minutes. During the run, my desktop would get a sharp CPU spike, and the mpssh executions started interfering with interactive work. I started wondering whether newer OpenSSH packages, the growing host count, or even ssh-agent were to blame.

It turned out that the biggest win was splitting my 2.1 MB ~/.ssh/known_hosts into one small file per host. The ssh_config(5) documentation says that UserKnownHostsFile accepts runtime tokens such as %h, so a path like ~/.ssh/known_hosts_single/%h is valid.

I did not prove the exact lookup algorithm OpenSSH uses internally, so I will not speculate too much there. But the benchmark was clear enough: once I stopped feeding SSH a monolithic known_hosts file, the runtime dropped from about two minutes to about thirty seconds with the same host list and the same default 50 ms delay between forks.

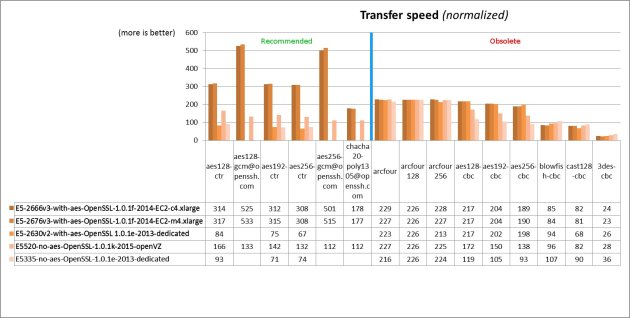

Benchmark Summary

| Setup | Best time | What it showed |
|---|---|---|
| Baseline, default SSH behavior, monolithic known_hosts, parallelism of 999 | 2m03.482s | This was the original pain point. |
| Per-host known_hosts, default 50 ms delay | 26.840s | About 4.6x faster without any aggressive client-side tuning. |
| Same per-host setup, but 0 ms delay | 16.228s | Faster again, but much harsher on local CPU. |
| Per-host setup plus agent/key experiments | Roughly 27-32s at 50 ms | Disabling ssh-agent or switching RSA to Ed25519 did not materially change the result. |
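As a quick check on the ratios, the best times can be converted to seconds and divided by the baseline. This is only a small arithmetic sketch; the function name `parse_time` and the dictionary labels are made up for illustration, and the values are copied from the table.

```python
import re

def parse_time(text: str) -> float:
    """Convert a shell `time` value such as '2m03.482s' or '26.840s' to seconds."""
    match = re.fullmatch(r"(?:(\d+)m)?(\d+(?:\.\d+)?)s", text)
    if match is None:
        raise ValueError(f"unrecognized time value: {text!r}")
    minutes = int(match.group(1) or 0)
    return minutes * 60 + float(match.group(2))

# Best times copied from the benchmark table; the labels are illustrative.
best_times = {
    "monolithic, 50 ms delay": "2m03.482s",
    "per-host, 50 ms delay": "26.840s",
    "per-host, 0 ms delay": "16.228s",
}

baseline = parse_time(best_times["monolithic, 50 ms delay"])
speedups = {name: baseline / parse_time(value) for name, value in best_times.items()}

for name, factor in speedups.items():
    print(f"{name}: {parse_time(best_times[name]):8.3f}s  {factor:.2f}x")
```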

The spawn delay also mattered, but in a different way. Reducing it from the default 50 ms to 5 ms or 0 ms shaved off more seconds, but it also pushed much harder on local CPU. In one 0 ms run, CPU idle dropped to 0% for about five seconds. That is why I kept the default 50 ms in normal use. Getting down to about 27 to 30 seconds while keeping the machine responsive was already good enough.

I also chased a couple of dead ends. I saw ssh-agent spike to 100% CPU often enough that it looked suspicious, so I tested a temporary passwordless key and forced IdentityAgent=none. Separately, I tried an Ed25519 key instead of my older RSA key. Neither experiment changed the overall picture in a meaningful way.

My ~/.ssh/config is also fairly large. I even tried splitting the alias-heavy part into a separate include file of about 78 KB, guarded by a Match originalhost stanza, because mpssh uses the full hostnames and those aliases are irrelevant for the benchmarked hosts. That did not help either. OpenSSH still reads the included file in order to parse it, even if it does not end up matching the current host. I still keep that Match stanza around, though, because it may become useful in the future if OpenSSH ever starts handling this case more efficiently.

    # mpssh uses full hostnames, so this alias file is irrelevant here
    Match originalhost ??,???,????
        Include config.short-host-aliases
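To see which names a pattern list like `??,???,????` would select, here is a rough Python approximation of the ssh_config pattern rules (`?` matches exactly one character, `*` matches any run). It uses `fnmatch` as a stand-in and does not handle negated patterns, so treat it as an illustration rather than OpenSSH's matcher. The host names are hypothetical.

```python
from fnmatch import fnmatchcase

def matches_pattern_list(hostname: str, pattern_list: str) -> bool:
    """Return True if hostname matches any pattern in a comma-separated list.

    Approximation of ssh_config PATTERNS: '?' is one character, '*' is any run.
    Negated patterns ('!...') are not handled in this sketch.
    """
    return any(fnmatchcase(hostname, p.strip()) for p in pattern_list.split(","))

patterns = "??,???,????"

# Hypothetical names: short aliases versus a full hostname as mpssh would use it.
for name in ["db", "web1", "web01", "web1.example.com"]:
    print(name, matches_pattern_list(name, patterns))
```

Only names of two to four characters match, which is why the stanza singles out short aliases and never applies to the fully qualified names in the benchmark.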

How To Split known_hosts Per Host

I wrote a small helper script for this and put it in the mpssh repository. The script reads hostnames from standard input or from a file, resolves hostnames to IP addresses, extracts matching entries from the monolithic file with ssh-keygen -F, and writes one small file per host into ~/.ssh/known_hosts_single. It also handles custom-port entries such as [git.example.com]:7999.
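The core of that flow can be sketched in a few lines of Python. This is not the script from the repository, only a minimal illustration under some assumptions: the names `keygen_lookup`, `file_name_for`, and `split_known_hosts` are invented here, IP resolution is left out, and the per-host file for a custom-port entry is named after the bare host because `%h` carries no port.

```python
import subprocess
from pathlib import Path
from typing import Callable, Iterable, List

def keygen_lookup(host: str, known_hosts: Path) -> List[str]:
    """Return the known_hosts lines that `ssh-keygen -F` finds for host."""
    result = subprocess.run(
        ["ssh-keygen", "-F", host, "-f", str(known_hosts)],
        capture_output=True, text=True, check=False,
    )
    # ssh-keygen prints a "# Host ... found" comment before each hit; drop those.
    return [l for l in result.stdout.splitlines() if l and not l.startswith("#")]

def file_name_for(host: str) -> str:
    """Assumption: name the file after the bare host, since %h has no port."""
    if host.startswith("[") and "]:" in host:
        return host[1:host.index("]:")]
    return host

def split_known_hosts(
    hosts: Iterable[str],
    known_hosts: Path,
    out_dir: Path,
    lookup: Callable[[str, Path], List[str]] = keygen_lookup,
) -> int:
    """Write one file per host into out_dir; return how many hosts had entries."""
    out_dir.mkdir(parents=True, exist_ok=True)
    written = 0
    for host in hosts:
        lines = lookup(host, known_hosts)
        if not lines:
            continue  # nothing recorded for this host in the monolithic file
        target = out_dir / file_name_for(host)
        existing = target.read_text().splitlines() if target.exists() else []
        merged = existing + [l for l in lines if l not in existing]
        target.write_text("\n".join(merged) + "\n")
        written += 1
    return written
```

Passing the lookup as a parameter keeps the file handling testable without a real known_hosts file. A call such as `split_known_hosts(hosts, Path.home() / ".ssh/known_hosts.monolith", Path.home() / ".ssh/known_hosts_single")` would use `ssh-keygen` directly.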

If HashKnownHosts was enabled in your SSH configuration, converting usually requires a plain-text list of all your servers, because the monolithic file does not contain readable hostnames anymore. If HashKnownHosts was disabled, you can usually extract that list from the existing monolithic known_hosts file with a simple cat and awk pipeline.
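For the unhashed case, the same extraction can be sketched in Python instead of cat and awk. The function name `list_hosts` is hypothetical, and the sketch makes two simplifying assumptions: hashed entries (those starting with `|1|`) are skipped because they cannot be read back, and marker lines such as `@cert-authority` are skipped as well.

```python
from typing import Iterable, List

def list_hosts(lines: Iterable[str]) -> List[str]:
    """Collect unique host names from unhashed known_hosts lines, first seen first."""
    seen = {}
    for raw in lines:
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # blank line or comment
        first_field = line.split()[0]
        if first_field.startswith("@"):
            continue  # marker line (@cert-authority, @revoked); skipped in this sketch
        if first_field.startswith("|1|"):
            continue  # hashed entry, the hostname is not recoverable
        # The first field may hold several comma-separated names and addresses.
        for host in first_field.split(","):
            seen.setdefault(host, None)
    return list(seen)

# Made-up sample lines in known_hosts format.
sample = [
    "# comment",
    "example.com,203.0.113.10 ssh-ed25519 AAAA",
    "|1|abc=|def= ssh-rsa AAAA",
    "[git.example.com]:7999 ssh-rsa AAAA",
    "example.com ssh-rsa BBBB",
]
print("\n".join(list_hosts(sample)))
```

To build a servers.list from a real file, pass an open file handle instead of `sample`, since the function accepts any iterable of lines.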

Here is the migration flow I used, rewritten with generic hostnames and paths:

    mv ~/.ssh/known_hosts ~/.ssh/known_hosts.monolith
    mkdir -p ~/.ssh/known_hosts_single
    python3 known_hosts_single/convert.py \
        --known-hosts-file ~/.ssh/known_hosts.monolith \
        --input-file ./servers.list \
        --progress

If you want to test a couple of entries first, the script can also read from standard input:

    printf '%s\n' example.com '[git.example.com]:7999' 203.0.113.10 | \
        python3 known_hosts_single/convert.py \
        --known-hosts-file ~/.ssh/known_hosts.monolith \
        --progress

Then edit ~/.ssh/config so that SSH uses the per-host files. I explicitly disable GlobalKnownHostsFile because my setup does not rely on a system-wide known_hosts file. If yours does, do not copy that line. I also set HashKnownHosts no, because once the host identity is already visible in the %h filename, hashing the contents of the tiny per-host file no longer buys much. I kept strict host key checking enabled because this was a performance optimization, not a security shortcut:

    Host *
        GlobalKnownHostsFile none
        UserKnownHostsFile ~/.ssh/known_hosts_single/%h
        HashKnownHosts no
        StrictHostKeyChecking yes

The important part is %h. SSH expands it to the target hostname, so each connection only opens the tiny file for that host instead of making every connection consult one large shared file.

Reproducing The Benchmark

For an apples-to-apples comparison, these are the important commands. I kept -p 999 because that was the clean baseline I measured before and after the change:

    # Baseline
    time mpssh -p 999 -u root -f ./servers.list true

    # Same host list, but with per-host known_hosts files
    time mpssh -p 999 -u root -f ./servers.list \
        -O 'o UserKnownHostsFile=~/.ssh/known_hosts_single/%h' \
        -O 'o StrictHostKeyChecking=yes' \
        true

    # More aggressive spawning
    time mpssh -p 999 -d 0 -u root -f ./servers.list \
        -O 'o UserKnownHostsFile=~/.ssh/known_hosts_single/%h' \
        -O 'o StrictHostKeyChecking=yes' \
        true

If you want to experiment further, mpssh also lets you adjust the delay between SSH forks with -d MSEC. In my case, lower values were useful for benchmarks but not for everyday use because they pushed too much CPU pressure back onto the local machine.
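Since the summary table reports a best time per setup, it can help to repeat each command a few times and keep the fastest run. The following is a minimal sketch of one way to do that, not a description of how the numbers in this post were collected. The function name `time_runs` is made up, and the demo times a trivial Python process as a stand-in so that it runs anywhere.

```python
import subprocess
import sys
import time
from typing import List, Sequence

def time_runs(command: Sequence[str], runs: int = 3) -> List[float]:
    """Run command `runs` times and return each wall-clock duration in seconds."""
    durations = []
    for _ in range(runs):
        start = time.perf_counter()
        # Output is discarded; only the elapsed time matters for the comparison.
        subprocess.run(command, stdout=subprocess.DEVNULL,
                       stderr=subprocess.DEVNULL, check=False)
        durations.append(time.perf_counter() - start)
    return durations

# Stand-in command; for a real measurement substitute the mpssh invocation, e.g.
# ["mpssh", "-p", "999", "-u", "root", "-f", "./servers.list", "true"]
durations = time_runs([sys.executable, "-c", "pass"], runs=3)
print(f"best: {min(durations):.3f}s  worst: {max(durations):.3f}s")
```

Taking the minimum filters out runs that were disturbed by other activity on the desktop, which matters here because the local CPU is the contended resource.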

One more thing worth keeping in mind is ControlMaster with ControlPersist. That OpenSSH feature can reuse an already established connection to the same host for later sessions. I have not benchmarked it for this workload, but for repeated connections to the same machines it has the potential to reduce SSH connection setup overhead a lot.

Long story short, if you fan out SSH connections to hundreds or thousands of hosts, do not assume that the network or the private key type is the only thing worth checking. A large known_hosts file can be enough to waste more than a minute and a lot of CPU per batch. Splitting it per host kept host key verification in place and made mpssh feel fast again.